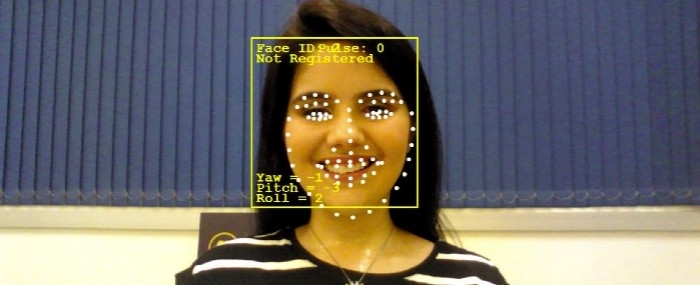

System developed by Hoobox interprets facial expressions and other behavioral cues and can be used to assess the status of patients in intensive care, babies in cribs or passengers in self-driving cars (image: Hoobox Robotics)

Published on 05/13/2021

By Elton Alisson | Agência FAPESP – At CES 2019, the latest edition of the Consumer Electronics Show, the world’s largest annual technology trade fair, held in early January in Las Vegas, Nevada (USA), Intel’s booth featured a facial recognition system that translates facial expressions into commands for controlling motorized wheelchairs.

The system was developed by Hoobox Robotics with support from FAPESP’s Innovative Research in Small Business Program (PIPE).

Based in Campinas, São Paulo State, Hoobox was selected in 2018 to join Intel’s startup accelerator program “AI for Social Good”, which promotes the development of social impact solutions using artificial intelligence (AI) and Intel’s hardware and software.

The program provides technical training and shared marketing resources, as well as the opportunity to participate in Intel Capital’s fundraising initiatives with investors, allowing startups to accelerate the development of their technological solutions.

The program enabled Hoobox’s engineers and developers to work with Intel’s technical team on ways to optimize the performance of the AI algorithms used by the system to detect and interpret facial expressions.

“There’s no exclusivity agreement. We know that the benefits of partnering with such a large organization will help us transform our facial recognition system into optimized solutions that can be produced on a large scale,” Hoobox CEO Paulo Gurgel Pinheiro told Agência FAPESP.

The system developed by Hoobox translates facial expressions into wheelchair control commands without invasive body sensors. The user’s facial expressions are captured by a camera and interpreted by AI algorithms running on a small computer mounted on the wheelchair. The algorithms convert the expressions into control commands, such as move forward or backward and turn left or right.

Currently, the technology lets users choose from more than ten expressions, such as frowning, winking or smiling, to use as commands. It can also predict when the user is about to cough, sneeze or yawn, and it detects when the user is talking to someone else. In these situations, the link between facial recognition and wheelchair control is deactivated to prevent unintended movements and avoid accidents.

“The system is capable of capturing data for almost 100 points on the user’s face, including the shape of their mouth, nose, lips and eye cavities, with high precision,” Pinheiro said.

The system is called Wheelie 7 because, according to Hoobox, it can be installed on any commercially available motorized wheelchair in just seven minutes.

The Wheelie 7 kit is marketed by subscription in the US only. “We don’t sell the kit itself. Customers pay a monthly fee of US$300,” Pinheiro explained.

Sixty people are currently using a prototype in the US. They include quadriplegics with spinal cord injuries, patients with neurodegenerative diseases such as amyotrophic lateral sclerosis (ALS), stroke victims and war veterans.

More than 300 people on the waiting list will receive the kit in April, and another 500 will receive it in December. These users are already paying the US$300 monthly fee.

“We offer this form of subscription to subsidize the cost of the kit. It also enables us to evaluate the system’s day-to-day use and improve it over time,” Pinheiro said.

The firm plans to offer the product in Brazil but believes it will have to be marketed differently. “The subscription model doesn’t seem to work well in Brazil,” Pinheiro said.

New applications

Hoobox has begun a second round of fundraising, seeking new investment of US$2.5 million by the end of this year. According to Pinheiro, this will be used to complete development and to design and test new applications of the technology.

“We’re talking to several venture capital funds in the US, China and Brazil,” he said. “We hope to make sufficient progress in these negotiations to obtain new investments and ramp up production to 3,000 units by 2020.”

During the first round of fundraising, which began in 2017 shortly after the firm completed its Phase 1 proof-of-concept project under FAPESP’s PIPE program, Hoobox was incubated by, and received investment from, the Albert Einstein Jewish-Brazilian Charitable Society (SBIBAE), which operates the Albert Einstein Hospital in São Paulo.

The partnership with SBIBAE enabled the firm to test new hospital applications of its facial recognition technology, including the detection of ten levels of pain and of behavioral cues such as fatigue, drowsiness, agitation, sedation and spasm. The technology will be validated for monitoring intensive-care patients at the hospital.

“Using cameras mounted on ICU beds, our system can continuously monitor up to six patients at once, measuring pain levels on a scale of 1 to 10,” Pinheiro said.

“We want to use the technology not just to detect behavior but also to predict it, so as to guarantee the patient’s wellbeing and keep medical teams up to date with more detailed information on patient status.”

The firm is developing this project in partnership with JLABS, Johnson & Johnson Innovation’s life science startup incubator at Texas Medical Center in Houston (USA). Hoobox was selected to participate in JLABS for an indefinite period.

In addition to helping Hoobox’s team refine the usability, design and safety of the wheelchair control system, JLABS researchers have contributed to the development of an AI baby monitor based on the same system.

“The idea is to use facial recognition to detect whether the baby is asleep or awake during the night, for example, or the baby’s position in the crib: whether it’s lying, sitting or standing,” Pinheiro said.

In partnership with a Silicon Valley-based firm (which cannot be named for confidentiality reasons), Hoobox will start work in April on a project to monitor passengers in self-driving cars, for which it will adapt its human behavior detection technology.

The goal is to use the technology to monitor attention, fatigue and other states experienced by front-seat passengers in self-driving cars to determine whether they are fit to take control in an emergency or to address a detected risk.

“The startup we’re partnering with has developed technology for self-driving cars and needs a high-precision facial recognition system to monitor and detect the behavior of passengers in this type of vehicle,” Pinheiro said.

He added that Hoobox’s facial recognition technology works as well in the dark as in brightly lit environments, and that the subject’s head can be in any position. This contrasts with face-framing technology, which requires subjects to look straight at the camera (as in a “mug shot”) in order to be recognized. Hoobox’s identification system dispenses with face framing.

“Our system can recognize a person’s face even if they’re sideways, at an angle of up to 60 degrees, or walking,” Pinheiro said.

These characteristics of the technology have caught the interest of companies in China, and Hoobox is opening an office in the city of Suzhou, mainly to serve clients in the security sector.

“We already have clients in Suzhou and other Chinese cities. We plan to implement the technology in security and access control projects, as well as a pilot project that involves monitoring patients in an intensive-care unit,” Pinheiro said.

Source: https://agencia.fapesp.br/29866